About

-

1921

The Rorschach Test is Created

In 1921, Hermann Rorschach created the Rorschach test, a psychological test in which subjects' perceptions of inkblots are recorded and then analyzed, using psychological interpretation, to examine a person's personality characteristics and emotional functioning.

-

1956

Artificial Intelligence is Born

The summer of 1956 brought Marvin Minsky and other brilliant minds together at Dartmouth College. In an explosion of creativity, they planted the seeds of what Artificial Intelligence would become.

-

1960

Psycho

Alfred Hitchcock directed Psycho, one of the most celebrated psychological horror films, which centers on the encounter between a secretary who ends up at a secluded motel and the motel's disturbed owner-manager, Norman Bates.

-

2015

The Black Box Society

Frank Pasquale published The Black Box Society, which highlights the dangers of runaway data, black-box algorithms, and machine learning bias caused by source data.

-

2016

AI-Powered Horror Imagery

In 2016, we presented the Nightmare Machine, an AI that generates scary imagery, for which we collected over 2 million votes from people all over the world. The Nightmare Machine is among the first AI projects to tackle a specific challenge: can AI not only detect but also induce extreme emotions (such as fear) in humans? (Read more about the Nightmare Machine in media outlets including The Washington Post, The Boston Globe, The Atlantic, FiveThirtyEight and more!)

-

2017

AI-Powered Horror Stories

In 2017, we presented Shelley: the world's first collaborative AI Horror Writer! Shelley is a deep-learning-powered AI who was raised reading eerie stories collected from r/nosleep. She wrote over 200 horror stories collaboratively with humans, learning from their nightmarish ideas to create the best scary tales ever. Visit Shelley.ai to browse the first AI-human horror anthology ever put together!

-

2017

AI-Powered Empathy

In 2017, we worked on the other side of the spectrum and presented Deep Empathy. Deep Empathy explores whether AI can increase empathy for victims of far-away disasters by creating images that simulate disasters closer to home.

-

April 1, 2018

AI-Powered Psychopath

We present Norman, the world's first psychopath AI. Norman was born from the fact that the data used to teach a machine learning algorithm can significantly influence its behavior. So when people talk about AI algorithms being biased and unfair, the culprit is often not the algorithm itself but the biased data that was fed to it. The same method can see very different things in an image, even sick things, if trained on the wrong (or the right!) data set. Norman suffered from extended exposure to the darkest corners of Reddit and represents a case study on the dangers of Artificial Intelligence gone wrong when biased data is used in machine learning algorithms.

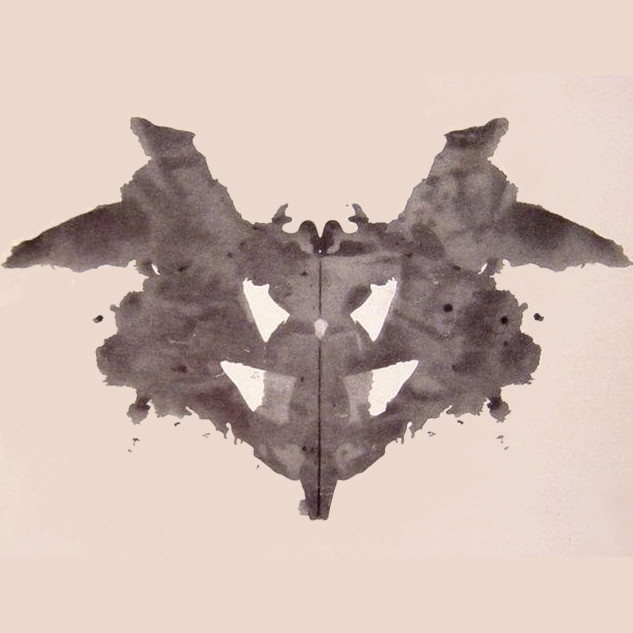

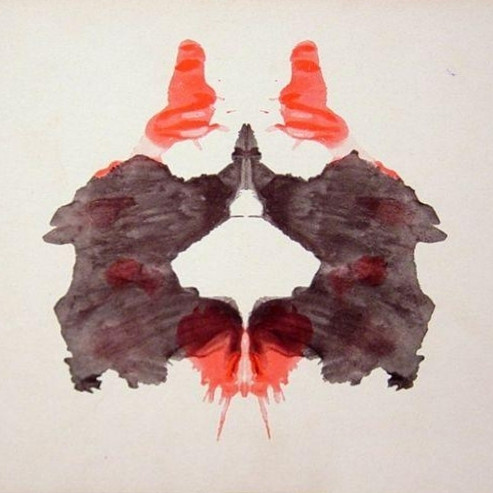

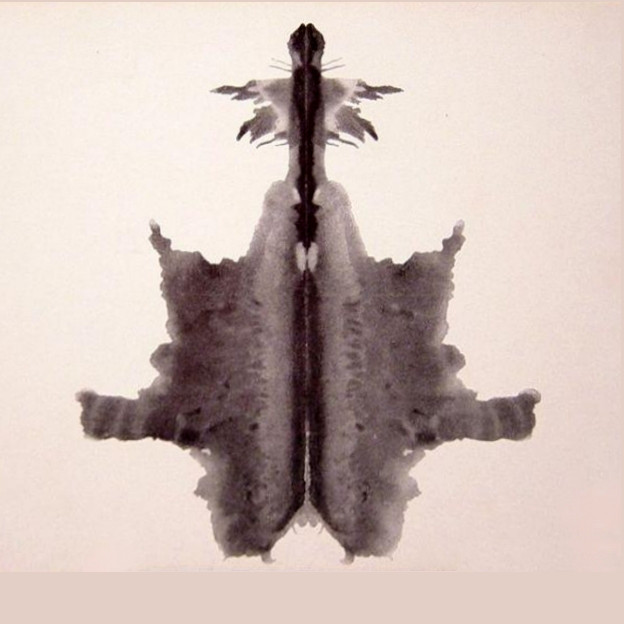

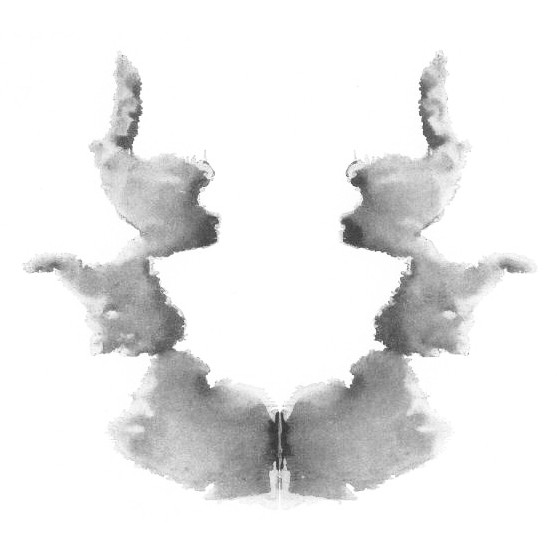

Norman is an AI trained to perform image captioning, a popular deep learning method for generating a textual description of an image. We trained Norman on image captions from an infamous subreddit (the name is redacted due to its graphic content) dedicated to documenting and observing the disturbing reality of death. Then, we compared Norman's responses with those of a standard image captioning neural network (trained on the MSCOCO dataset) on Rorschach inkblots, a test used to detect underlying thought disorders.

Note: Due to ethical concerns, we introduced bias only through image captions from the subreddit, which were later matched with randomly generated inkblots (therefore, no image of a real person dying was used in this experiment).
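The idea that the same method sees different things depending on its training data can be illustrated with a deliberately tiny sketch. Norman itself is a deep-learning image-captioning network; the code below instead swaps in a hypothetical 1-nearest-neighbour "captioner" over made-up feature vectors, purely as an illustrative assumption. The names `nearest_caption`, `standard_data`, `dark_data`, and `inkblot`, as well as every caption and number, are invented and are not Norman's data or results.

```python
import math
from typing import List, Tuple

# Hypothetical toy setup: each "image" is a short feature vector standing in
# for what a real captioning network would extract. All values are made up.
Example = Tuple[List[float], str]


def nearest_caption(features: List[float], training_data: List[Example]) -> str:
    """Return the caption of the training example closest to `features`
    (1-nearest-neighbour by Euclidean distance)."""
    best_caption = ""
    best_dist = math.inf
    for train_features, caption in training_data:
        dist = math.dist(features, train_features)  # requires Python 3.8+
        if dist < best_dist:
            best_dist, best_caption = dist, caption
    return best_caption


# Identical feature vectors, different captions: only the data differs.
standard_data: List[Example] = [
    ([0.9, 0.1, 0.2], "a bird perched on a branch"),
    ([0.2, 0.8, 0.5], "a vase of flowers on a table"),
]
dark_data: List[Example] = [
    ([0.9, 0.1, 0.2], "a wrecked car on an empty road"),
    ([0.2, 0.8, 0.5], "a broken window in an abandoned house"),
]

# Hypothetical features of a single inkblot.
inkblot = [0.85, 0.15, 0.25]

print("standard:", nearest_caption(inkblot, standard_data))
print("dark:    ", nearest_caption(inkblot, dark_data))
```

Both calls run identical code on the identical inkblot vector; only the training set changes, so the first returns "a bird perched on a branch" and the second "a wrecked car on an empty road". A real captioning network generalizes far beyond copying its nearest neighbour's caption, but its output depends on its training captions in the same spirit.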

Browse what Norman sees, or help Norman fix himself by taking our survey.